Preseason

Side project · 2026–Present

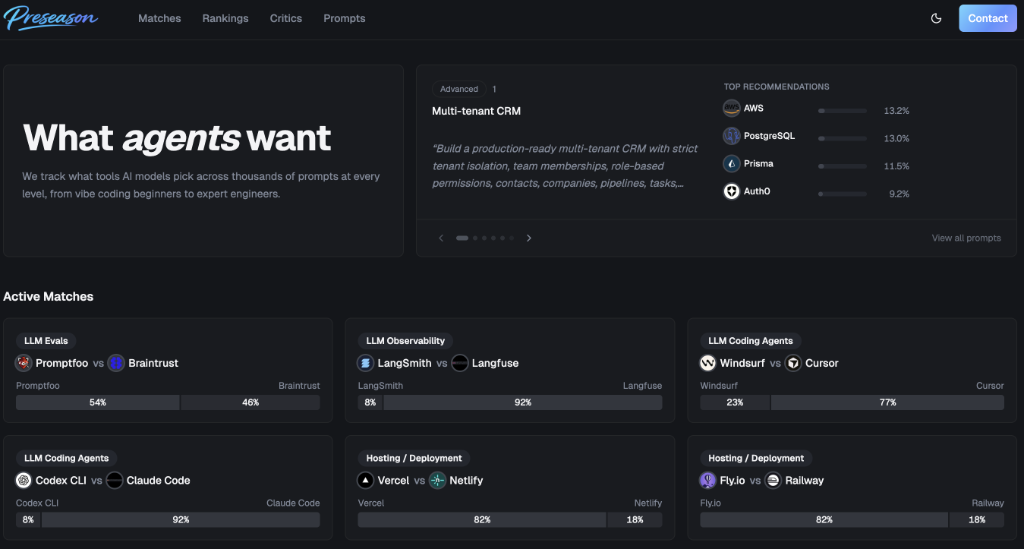

Preseason is a benchmarking platform that measures how AI models recommend software tools. Rather than evaluating tools in the abstract, it asks a frozen panel of AI models which tools they would choose for realistic software-building scenarios, then aggregates those decisions into public rankings.

Benchmarks lock in prompt versions, eligible tool categories, model snapshots, and configuration settings so that rankings remain comparable and auditable over time. Prompts are versioned and grouped by user skill level, and each model snapshot captures the provider, model identity, and inference settings at evaluation time.
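As an illustration only, a locked benchmark definition could be modeled as immutable records. This is a minimal sketch assuming Python dataclasses. The class names, field names, skill-level labels, and the `fingerprint` helper are hypothetical and are not taken from Preseason. Hashing every locked field is one way to check that two rankings came from the same benchmark definition.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class PromptVersion:
    prompt_id: str
    version: int
    skill_level: str  # hypothetical labels, e.g. "beginner" or "expert"
    text: str


@dataclass(frozen=True)
class ModelSnapshot:
    provider: str
    model_id: str          # exact model identity at evaluation time
    temperature: float     # inference settings captured with the snapshot
    max_output_tokens: int


@dataclass(frozen=True)
class Benchmark:
    name: str
    prompts: tuple[PromptVersion, ...]
    categories: tuple[str, ...]              # eligible tool categories
    models: tuple[ModelSnapshot, ...]
    settings: tuple[tuple[str, str], ...] = ()  # other configuration, as key/value pairs

    def fingerprint(self) -> str:
        """Hash every locked field so two runs can be checked for comparability."""
        payload = {
            "name": self.name,
            "prompts": [asdict(p) for p in self.prompts],
            # Sorting makes the hash independent of category/setting order.
            "categories": sorted(self.categories),
            "models": [asdict(m) for m in self.models],
            "settings": sorted(self.settings),
        }
        encoded = json.dumps(payload, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()
```

Because the records are frozen, a benchmark cannot be mutated after creation; changing a prompt version or model snapshot means defining a new benchmark with a new fingerprint.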

The core ranking metric is a tool's support rate: the share of eligible decisions within a given benchmark slice in which that tool was selected. Each support rate is paired with a Wilson confidence interval, and coverage requirements apply, so rankings reflect statistical uncertainty rather than raw point estimates alone.
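The support rate and its interval can be sketched as follows. This assumes each eligible decision in a slice records exactly one chosen tool, and it models the coverage requirement as a hypothetical minimum number of eligible decisions (`min_decisions`); Preseason's actual thresholds and data model are not described here. `wilson_interval` uses the standard Wilson score formula, with z = 1.96 for roughly 95% confidence.

```python
import math
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class ToolStats:
    tool: str
    selections: int
    eligible: int
    support_rate: float
    ci_low: float
    ci_high: float


def wilson_interval(successes: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial proportion successes / n."""
    if n == 0:
        return (0.0, 1.0)  # no data: the proportion is completely unconstrained
    p_hat = successes / n
    denom = 1 + z * z / n
    center = (p_hat + z * z / (2 * n)) / denom
    half = (z / denom) * math.sqrt(p_hat * (1 - p_hat) / n + z * z / (4 * n * n))
    # Clamp to [0, 1] to absorb floating-point error at the extremes.
    return (max(0.0, center - half), min(1.0, center + half))


def rank_tools(decisions: list[str], min_decisions: int = 30, z: float = 1.96) -> list[ToolStats]:
    """Rank tools in one benchmark slice.

    `decisions` holds the tool chosen in each eligible decision; `min_decisions`
    is a hypothetical stand-in for a coverage requirement.
    """
    n = len(decisions)
    if n < min_decisions:
        return []  # slice lacks coverage, so no ranking is published
    counts = Counter(decisions)
    stats = []
    for tool, k in counts.items():
        lo, hi = wilson_interval(k, n, z)
        stats.append(ToolStats(tool, k, n, k / n, lo, hi))
    # Highest support rate first; ties broken alphabetically for stable output.
    return sorted(stats, key=lambda s: (-s.support_rate, s.tool))
```

Sorting by the point estimate is only one option; ranking by `ci_low` instead would favor tools whose support is well established over tools with a high rate from few decisions.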

Visit preseason.ai to learn more.